Our August 2021 Facebook Ads Tests

Facebook Ads Guide

Every month, our expert team of Media Buyers performs a series of Facebook Advertising Tests to offer you expert tips on how to optimize your campaigns and strategies.

To be the first to receive our test results, subscribe to our iOS 14 Squad Newsletter! Click here to subscribe for free and begin taking advantage of our expert tips.

This is our new test from August 30.

During Black Friday 2019, we decided not to send traffic through a client's website to qualify it for the sale.

The reason: the website was far from optimal.

It was slow to load and had too many buttons and a "complicated" layout. All of these red flags led us to build our own sales page and use only the bare minimum from the client's site, namely the checkout page.

We are well aware that this client is not the only one experiencing this kind of problem. That's why Facebook launched Instant Experience.

A native format that allows you to present the product you want via carousels, videos and clickable images.

The perfect tool to qualify our traffic while creating specific retargeting audiences.

We already use this ad format, but had never formally tested it. So we took the plunge to see whether Instant Experience could qualify our traffic better (and thus sell more) than a standard campaign that links directly to the website.

These are the results with various clients as of August 30.

TEST #1 - ECOM CLIENT - 33 DAYS TESTING.

Context :

- Client : Ecommerce

- Audience : Lookalike and Stack Interest

- Objective : Conversion / Purchase / CBO

We launched an Instant Experience campaign to compete with our other campaign using standard ads.

We used the same visuals in both of them.

Results :

I.E.

- $1,573 spent

- 5.34 ROAS

- $14.57 CAC

Standard

- $3,298 spent

- 5.98 ROAS

- $13.52 CAC

After 33 days, the Instant Experience campaign is performing close to our standard campaign, with a slightly lower ROAS and a slightly higher CAC.

Conclusion : Even though this campaign did not beat the standard campaign, it allowed us to scale the client's account while generating comparable returns. We are validating this test.
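To put the two campaigns side by side, here is a minimal sketch that derives approximate revenue and purchase counts from the reported figures. It assumes the usual definitions (ROAS = revenue / spend, CAC = spend / purchases); the function names are ours, and the derived numbers are estimates, not figures reported by the ad account.

```python
# Assumed definitions: ROAS = revenue / spend, CAC = spend / purchases.
def estimate_revenue(spend, roas):
    """Approximate revenue implied by a spend and a ROAS."""
    return spend * roas


def estimate_purchases(spend, cac):
    """Approximate number of purchases implied by a spend and a CAC."""
    return spend / cac


# Figures reported for Test #1.
campaigns = {
    "Instant Experience": {"spend": 1573, "roas": 5.34, "cac": 14.57},
    "Standard": {"spend": 3298, "roas": 5.98, "cac": 13.52},
}

for name, c in campaigns.items():
    revenue = estimate_revenue(c["spend"], c["roas"])
    purchases = estimate_purchases(c["spend"], c["cac"])
    print(f"{name}: ~${revenue:,.0f} revenue, ~{purchases:.0f} purchases")
```

Read this way, the Instant Experience campaign contributed additional volume at a return close to the standard campaign, which is the sense in which it helped scale the account.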

-------------------------------------------------------------------------

TEST #2 - ECOM CLIENT - 5 DAYS TESTING.

Context :

- Client : Ecommerce

- Audience : Lookalike and Stack Interest

- Objective : Conversion / Purchase / CBO

This client has a varied catalog of products, so we decided to test Instant Experience ads.

Results :

While our other campaigns were generating purchases at $20 each during the period, the Instant Experience campaign spent $90 and generated no sales.

Worse, it generated only 16 add to carts ($5.62 per add to cart), while the other campaigns had a much lower cost per add to cart.

Conclusion : I do not recommend this test as it did not work at all for this client.

------------------------------------------------------------------------

TEST #3 - ECOM CLIENT - 49 DAYS TESTING

Context :

- Client : Ecommerce

- Audience : Lookalike and Stack Remarketing

- Objective : Conversion / Purchase / CBO

We tested an Instant Experience ad in the same campaign as other ads with a similar offer, with the goal of converting to purchase.

Results :

Instant Experience: 763.31 euros spent; ROAS = 2.80

Other ads: 984.06 euros spent; ROAS = 1.85

Conclusion : We recommend testing Instant Experience for an Ecommerce with an easy to understand product catalog.

-------------------------------------------------------------------------

TEST #4 - ECOM CLIENT - 2 DAYS TESTING.

Context:

- Client : Ecommerce

- Audience : Lookalike and Stack Interest

For a grocery e-commerce, we relaunched an Instant Experience that had already been tested in the past (February) with relatively average results. The budget was $100/day.

Results:

Total failure. After 2 days and $164 spent, the campaign had generated no sales and not a single add to cart, with a CTR of 0.31%. I stopped the campaign.

Conclusion: Inconclusive.

-------------------------------------------------------------------------

TEST #5 - ECOM CLIENT - DAYS TESTING.

Context :

- Client : Ecommerce

- Audience : BROAD

- Objective : Conversion / Purchase / CBO

We tested the Instant Experience format with the brand's two most popular collections against still images from those same collections in the same target audience.

Result : Over a period of more than a week, no purchases were generated. The creative failed to gain the upper hand over the still images, which had accumulated more learning.

Conclusion : It should be tested in a new campaign.

-------------------------------------------------------------------------

The situation is interesting because if the Instant Experience does not work, you'll know right away. No need to wait a whole week, in 2 days you will have the answer.

Otherwise, you can easily run this campaign for more than a month! This way you can scale the account, increase your conversions and hot audience volume (both visitors and engagement with the website). Because remember, you can directly retarget people who have interacted with your Instant Experience.

We recommend trying it yourself, in a separate campaign or in an existing one if you do not have extra budget!

Broad Audience : July 16th, 2021

As promised, here are the results (as of July 16th, 2021) of our latest test.

Among all of the issues brought about by iOS 14, the ones that troubled us the most were those related to Lookalike audiences.

With iOS 14 looming over us all, we immediately noticed that our Lookalike audiences simply weren’t converting as much as usual...

...and there’s a logical explanation for that!

With the Facebook Pixel losing performance points, Custom and Lookalike audiences were bound to follow suit. That’s why we asked ourselves the following question: Wouldn't Facebook perform better without any targeting limits in place?

The platform can’t offer up all of the information we need...but that doesn’t mean it can’t access it regardless.

With that in mind, we decided to test Broad audiences for several client campaigns – no interest-based or geographical specifications. We wanted to see if Facebook would be able to outperform one of its most important tools.

Here are the results as of July 16th, 2021.

----------------------------------------------------

TEST #1 - E-COM CLIENT - 10-DAY TEST.

Context: The first client we launched this test for was an e-commerce client (that sells only 1 product) for a Conversion (Purchase objective) campaign. We tested our Broad audience against 2 Lookalike audiences of prospects that had already converted (3% and 6%).

RESULTS: The Broad audience held up for about 10 days and used up $9,200 in budget (64% of our total budget), generating a CAC of $71.06. The two other audiences hit CACs of $53.58 and $37.97, respectively. The limit set in place by our client: $55.

Conclusion: Inconclusive test.
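As a rough illustration of how this result reads against the client's limit, the sketch below backs out the total budget from the Broad audience's 64% share and checks each CAC against the $55 ceiling. The helper names are hypothetical, and mapping $53.58 to the 3% Lookalike and $37.97 to the 6% Lookalike is our reading of "respectively".

```python
def total_budget_from_share(spend, share):
    """Total budget implied by one audience's spend and its share of budget."""
    return spend / share


def within_limit(cac, limit):
    """True when a CAC is at or under the client's ceiling."""
    return cac <= limit


CAC_LIMIT = 55.00  # limit set by the client

# Assumed mapping of the reported CACs to audiences.
cacs = {"Broad": 71.06, "Lookalike 3%": 53.58, "Lookalike 6%": 37.97}

total_budget = total_budget_from_share(9200, 0.64)
print(f"Implied total budget: ~${total_budget:,.0f}")

for audience, cac in cacs.items():
    status = "within limit" if within_limit(cac, CAC_LIMIT) else "over limit"
    print(f"{audience}: ${cac:.2f} ({status})")
```

Only the Broad audience lands above the ceiling, even though it absorbed most of the budget.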

----------------------------------------------------

TEST #2 - LEAD GEN CLIENT - 1 MONTH TEST.

Context: For this Lead Gen client, we tested a Broad audience against 2 large interest-based audiences.

Results: Very quickly, the Broad audience received the majority of our budget, hitting a CPL of €13.1 for over €2,900 in spend. In comparison, our highest-performing audience for this client hit a CPL of €11.39 for €1,400 in spend. Overall, the Broad audience was certainly more expensive, but it kept getting better and better week after week. The month after we initially launched this test, the Broad audience hit a CPL of €12.13, making it the highest-performing audience for this campaign thus far.

Conclusion: This test certainly proved to be more nuanced. The Broad audience initially seemed less effective, yet it allowed us to scale the campaign by over 76% in just one month. We were thus able to centralize all of our efforts during a period in which all of our other audiences were becoming more costly.

----------------------------------------------------

TEST #3 - E-COM CLIENT - 20-DAY TEST.

Context: For this client, we decided to pit a 5% Lookalike audience (previous buyers) against a 100% Broad audience with no specific targeting filters (interest, location, etc.) for a Conversion campaign with a purchase objective.

Results: During the first few weeks, both ad sets performed quite well and generated similar CPAs. Admittedly, the Lookalike ad set achieved a better ROAS. However, shortly after, the campaign ran out of steam, and we saw a drop in results for both ad sets.

Conclusion: Upon reflection, both audiences work well together. One generates a profit while the other provides us with plenty of new customers for our retargeting campaigns. We recommend this test!

----------------------------------------------------

TEST #4 - E-COM CLIENT - 7-DAY TEST.

Context: We tested a Broad audience against a 3% LAL Purchase audience. The campaign also had a stacked remarketing audience.

Results: The Broad audience received a budget of €2,185 and achieved a ROAS almost 1 point higher than the 3% LAL Purchase audience, which received a total of €1,158.

Conclusion: We recommend this test! It outperformed one of our best audiences for this client.

----------------------------------------------------

TEST #5 - LEAD GEN CLIENT - 14 DAY TEST.

Context: For this client, we tested a Broad Audience against a 3% Lookalike audience of prospects who had previously registered for our client’s webinar. The campaign also had a stacked remarketing audience.

Results:

- Broad audience: €3,023 budget ; CPL = €26.06

- LAL audience: €4,158 budget ; CPL = €21.66

Conclusion: We won’t be performing this test again for this client.

----------------------------------------------------

TEST #6 - E-COM CLIENT - 14 DAY TEST.

Context: For this subscription-based e-commerce client, we tested a Broad audience against an Interest-based audience that historically has worked very well for us.

Results:

- Broad Audience: $1,599 budget ;

- Interest-based Audience: $2,115 budget ;

The Interest-Based audience’s ROAS was slightly higher than that of the Broad audience, but not by much.

Conclusion: Inconclusive test for both audiences. We would have to redo this test using another campaign and offer.

----------------------------------------------------

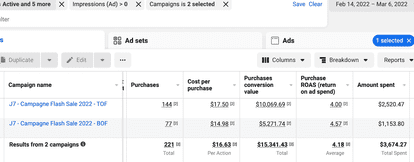

TEST #7 - E-COM CLIENT - 7-DAY TEST.

Context: For this e-commerce client, we pitted a LAL audience against a Broad audience (no interests) using CBO. Except for the audience, all other campaign elements were identical.

Results :

Lookalike Audience:

- Investment: $9,060

- Purchases: 41 at $220 each

Broad Audience :

- Investment: $10,696

- Purchases: 48 at $222.84

The Lookalike audience’s ROAS was slightly higher than that of the Broad audience, but not by much.

Conclusion : Inconclusive test – both audiences yielded very similar results.
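To show how close the two audiences were, here is a small sketch that recomputes cost per purchase from the reported investment and purchase counts, then expresses the difference as a percentage. The function names are ours. Note that dividing the Lookalike figures gives roughly $220.98, which the results above appear to report as $220.

```python
def cost_per_purchase(spend, purchases):
    """Average cost of one purchase."""
    return spend / purchases


def relative_gap(a, b):
    """Difference between two costs, relative to the cheaper one."""
    return abs(a - b) / min(a, b)


lal_cpp = cost_per_purchase(9060, 41)      # Lookalike audience
broad_cpp = cost_per_purchase(10696, 48)   # Broad audience
gap = relative_gap(lal_cpp, broad_cpp)

print(f"Lookalike: ${lal_cpp:.2f} per purchase")
print(f"Broad:     ${broad_cpp:.2f} per purchase")
print(f"Gap:       {gap:.2%}")
```

A gap of under 1% on a few dozen purchases per audience is too small to separate the two, which matches the inconclusive verdict.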

----------------------------------------------------

TEST #8 - E-COM CLIENT - 7 DAY TEST.

Context: We have an Evergreen campaign for this client that has been running for several months. The campaign promotes the client’s products (at full price!), with the products changing every season. We’ve also been testing a Broad audience versus a 6% Lookalike audience - Buyers for several months now.

Results:

BROAD

- Amount Spent: $6,823.71

- ROAS: 3.07

- Purchases: 182

- CAC: $37.49

LOOKALIKE 6%

- Amount Spent: $13,413.22

- ROAS: 2.54

- Purchases: 249

- CAC: $53.87

Conclusion: Upon reflection, we can safely say that this test worked. We recommend testing a broad audience vs. your best Lookalike audience. This will allow you to reach two different audiences over a long period of time, if needed.
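Since the recommendation is to run both audiences together, it can help to look at the blended numbers. The sketch below is illustrative only: it recomputes each CAC from the reported spend and purchases, then combines the two ad sets, assuming ROAS = revenue / spend so that revenue can be backed out from each ROAS. All names are hypothetical.

```python
# Figures reported for Test #8.
ad_sets = {
    "Broad": {"spend": 6823.71, "purchases": 182, "roas": 3.07},
    "Lookalike 6%": {"spend": 13413.22, "purchases": 249, "roas": 2.54},
}


def cac(spend, purchases):
    """Customer acquisition cost: spend divided by purchases."""
    return spend / purchases


total_spend = sum(a["spend"] for a in ad_sets.values())
total_purchases = sum(a["purchases"] for a in ad_sets.values())
# Revenue is estimated from ROAS (assumed to be revenue / spend).
total_revenue = sum(a["spend"] * a["roas"] for a in ad_sets.values())

blended_cac = cac(total_spend, total_purchases)
blended_roas = total_revenue / total_spend

for name, a in ad_sets.items():
    print(f"{name}: CAC ${cac(a['spend'], a['purchases']):.2f}")
print(f"Blended: CAC ${blended_cac:.2f}, ROAS {blended_roas:.2f}")
```

The blended figures sit between the two ad sets and lean toward the Lookalike audience, because it received about twice the spend.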

---------------------------------------------------

We also conducted 4 other tests, which yielded the following results:

- 2 passed the test (Clients: E-commerce & Infopreneur)

- 1 failed (Client: Lead Gen)

- 1 was inconclusive (Client: E-commerce)

As you can see, we were very curious to see if Broad audiences would be a saving grace for us, which explains why we conducted so many tests.

Based on the results we presented to you today, we will continue to test Broad audiences for different clients and campaigns and we encourage you to do the same!

In our opinion, the best way to determine whether Broad audiences will work for you will be to put audiences that already work well for you (that you use regularly) in direct competition with new Broad audiences.

Lead Ads : August 9th, 2021

Here are the results of our latest test as of August 9th, 2021.

It all began on July 13th, when our Account Manager at Facebook mentioned this particular test during our call.

DABA, a.k.a. Dynamic Ads for Broad Audiences.

Like many of you, we already use DPAs, especially for retargeting campaigns. We’ve even launched DPA campaigns for acquisition, but never dug too deep into the matter.

Yet, during our call, Facebook recommended we test DPA campaigns further, as they’ve noticed many advertisers succeed using this strategy.

But can a DABA campaign work if it’s not for retargeting?

From what we gathered from our call, DABAs use data both from your website and from external sources. The data collected from your website helps determine which products generate the most purchases, while the data collected from external sources helps identify an audience with a strong purchase intent.

With this info in mind, we launched a number of campaigns with broad audiences, excluding retargeting audiences completely.

Here are the results of our tests for various clients as of August 9th, 2021.

------------------------------------------------

TEST 1 - E-COM CLIENT - 3 DAY TEST.

Context: We started off slow for this e-com client, choosing to invest $50/day. A company that sells children’s toys, this client only has 4 products in their catalog. We used a cold broad audience (USA), set a Purchase objective and, as usual, used CBO.

Results: 0 purchases after spending $134.99, 3 add to carts for $45/add to cart, a CPM of $22.33 and a CTR of 0.79%.

Conclusion: Inconclusive, but this client was not the ideal client for this test, as their product catalog is very limited.

-------------------------------------------------------------------------

TEST 2 - E-COM CLIENT - 14 DAY TEST.

Context: This time, we tested out this approach for a very niche client that sells very expensive products. During the month of July, our TOF campaigns generated add to carts for $338 and purchases for $487.

We launched a DABA campaign using a low budget, and after the first week, the campaign generated add to carts for $25.89 and purchases for $129.

We quickly stopped our 2 TOF campaigns to launch a new DABA campaign with a much larger budget. A mistake...our CAC jumped up to more than $1000.

Results:

DABA Broad Purchase:

- $2,400 spend

- $605 CAC

- 14 day test

TOF Purchase:

- $16,500 spend

- $487 CAC

- 28 day campaign

- A ROAS 0.56 points higher

Conclusion: We do not recommend this test for niche clients with very expensive products.

-------------------------------------------------------------------------

TEST 3 - E-COM CLIENT - 30 DAY TEST.

Context: For a client that sells clothing for babies, we launched a DABA campaign in April (DPA + BROAD audience).

Results:

- Spend: $2,467

- Cost per purchase: $35.24

- ROAS: 2.61

- CPM: $11.38

Conclusion: We strongly recommend this test. This campaign is still running!

-------------------------------------------------------------------------

TEST 4 - E-COM CLIENT - 6 DAY TEST.

Context: This client is an e-commerce company that sells American candies, sodas, and snacks.

We tested out a DABA audience for a Collection campaign using CBO – retargeting audiences excluded (+optimized for Purchases).

At the same time, we also tested out a standard TOF campaign using a broad audience and CBO (+optimized for Purchases).

Results:

DABA: ROAS = 2.15; Spend = 410 euros

*Interestingly, out of all of the ads in our ad set, Carousels generated the most profit with a ROAS of 3.04!

Standard Broad: Spend = 1,046 euros with a ROAS of 0.58 points less than the DABA

Conclusion: We recommend this test and strongly suggest you try a Carousel for this DABA campaign.

-------------------------------------------------------------------------

As you can see, this week’s test was very interesting. It resulted in 2 failures:

1. An e-com that sells very expensive products in a very niche market.

2. An e-com that only sells 4 products, resulting in limitations in distribution.

On the other hand, we had success with 2 e-commerce clients that sell popular products:

- Products everyone understands/is familiar with.

- Can be sold to a greater number of people.

Both e-coms also have healthy product catalogs (which is great for DPAs!) that can apply to various audiences.

If you’re a company that sells clothing, beard products, or even food, for example, we think it’ll be safe for you to replicate this test.

On another note, it would also be interesting to test this approach with a specific set of products, rather than promoting your entire catalog, in order to keep your ads consistent.

If you know someone else who’d be interested in our newsletter, feel free to share this email with them or direct them to our subscription page here.